The week in AI: Google goes all out at I/O as laws creep up


Keeping up with an industry as fast-moving as AI is a tall order. So until an AI can do it for you, here’s a handy roundup of the last week’s stories in the world of machine learning, along with notable research and experiments we didn’t cover on their own.

This week, Google dominated the AI news cycle with a range of new products that launched at its annual I/O developer conference. They run the gamut from a code-generating AI meant to compete with GitHub’s Copilot to an AI music generator that turns text prompts into short songs.

A fair number of these tools look to be legitimate labor savers, which is to say more than marketing fluff. I’m particularly intrigued by Project Tailwind, a note-taking app that leverages AI to organize, summarize and analyze files from a personal Google Docs folder. But they also expose the limitations and shortcomings of even the best AI technologies today.

Take PaLM 2, for example, Google’s newest large language model (LLM). PaLM 2 will power Google’s updated Bard chat tool, the company’s competitor to OpenAI’s ChatGPT, and serve as the foundation model for most of Google’s new AI features. But while PaLM 2 can write code, emails and more, it also, like comparable LLMs, responds to questions in toxic and biased ways.

Google’s music generator, too, is fairly limited in what it can accomplish. As I wrote in my hands-on, most of the songs I’ve created with MusicLM sound passable at best, and at worst like a four-year-old let loose on a DAW.

Much has been written about how AI will replace jobs: potentially the equivalent of 300 million full-time jobs, according to a report by Goldman Sachs. In a survey by Harris, 40% of workers familiar with OpenAI’s AI-powered chatbot tool, ChatGPT, said they are concerned that it’ll replace their jobs entirely.

Google’s AI isn’t the be-all and end-all. Indeed, the company is arguably behind in the AI race. But it’s an undeniable fact that Google employs some of the top AI researchers in the world. And if this is the best they can manage, it’s a testament to the fact that AI is far from a solved problem.

Here are the other AI headlines of note from the past few days:

- Meta brings generative AI to ads: Meta this week announced an AI sandbox, of sorts, to help advertisers create alternative ad copy, generate backgrounds from text prompts and crop images for Facebook or Instagram ads. The company said the features are available to select advertisers for now and that it will expand access to more advertisers in July.

- Added context: Anthropic has expanded the context window for Claude, its flagship text-generating AI model (still in preview), from 9,000 tokens to 100,000 tokens. The context window is the text the model considers before generating additional text, while tokens are the pieces raw text is split into (e.g., the word “fantastic” would be split into the tokens “fan,” “tas” and “tic”). Historically, and even today, poor memory has been an impediment to the usefulness of text-generating AI. But larger context windows could change that.

- Anthropic touts ‘constitutional AI’: Larger context windows aren’t the Anthropic models’ only differentiator. The company this week detailed “constitutional AI,” its in-house AI training technique that aims to imbue AI systems with “values” defined by a “constitution.” In contrast to other approaches, Anthropic argues that constitutional AI makes the behavior of systems both easier to understand and simpler to adjust as needed.

- An LLM built for research: The nonprofit Allen Institute for AI (AI2) announced that it plans to train a research-focused LLM called Open Language Model, adding to the large and growing open source library. AI2 sees Open Language Model, or OLMo for short, as a platform and not just a model, one that will allow the research community to take each component AI2 creates and either use it themselves or seek to improve it.

- New fund for AI: In other AI2 news, AI2 Incubator, the nonprofit’s AI startup fund, is revving up again at three times its previous size: $30 million versus $10 million. Twenty-one companies have passed through the incubator since 2017, attracting some $160 million in further funding and at least one major acquisition: XNOR, an AI acceleration and efficiency outfit that was subsequently snapped up by Apple for around $200 million.

- EU intros rules for generative AI: In a series of votes in the European Parliament, MEPs this week backed a raft of amendments to the bloc’s draft AI legislation, including settling on requirements for the so-called foundational models that underpin generative AI technologies like OpenAI’s ChatGPT. The amendments put the onus on providers of foundational models to apply safety checks, data governance measures and risk mitigations prior to putting their models on the market.

- A universal translator: Google is testing a powerful new translation service that redubs video in a new language while also synchronizing the speaker’s lips with words they never spoke. It could be very useful for a lot of reasons, but the company was upfront about the potential for abuse and the steps taken to prevent it.

- Automated explanations: It’s often said that LLMs along the lines of OpenAI’s ChatGPT are a black box, and certainly, there’s some truth to that. In an effort to peel back their layers, OpenAI is developing a tool to automatically identify which parts of an LLM are responsible for which of its behaviors. The engineers behind it stress that it’s in the early stages, but the code to run it is available in open source on GitHub as of this week.

- IBM launches new AI services: At its annual Think conference, IBM announced IBM Watsonx, a new platform that delivers tools to build AI models and provides access to pretrained models for generating computer code, text and more. The company says the launch was motivated by the challenges many businesses still experience in deploying AI within the workplace.
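To make the context-window item above a little more concrete, here’s a toy sketch of the two ideas it mentions: splitting text into subword tokens and keeping only the most recent tokens that fit in a window. It assumes a tiny, hypothetical vocabulary and a greedy longest-match splitter; real models like Claude use learned tokenizers (typically BPE-style) whose exact behavior isn’t described in the announcement, so treat this as an illustration only.

```python
from __future__ import annotations


def tokenize(word: str, vocab: set[str]) -> list[str]:
    """Greedy longest-match subword split (a toy, not a real BPE tokenizer)."""
    tokens = []
    i = 0
    while i < len(word):
        # Try the longest vocabulary piece that matches at position i.
        for j in range(len(word), i, -1):
            if word[i:j] in vocab:
                tokens.append(word[i:j])
                i = j
                break
        else:
            # No vocabulary piece matched: fall back to a single character.
            tokens.append(word[i])
            i += 1
    return tokens


def fit_to_context(tokens: list[str], window: int) -> list[str]:
    """Keep only the most recent `window` tokens; older ones fall out of view."""
    return tokens[-window:] if window > 0 else []


# Hypothetical vocabulary chosen to reproduce the example in the item above.
VOCAB = {"fan", "tas", "tic"}
print(tokenize("fantastic", VOCAB))  # ['fan', 'tas', 'tic']

# Four repetitions make 12 tokens; a 9-token window drops the oldest 3.
history = tokenize("fantastic", VOCAB) * 4
print(len(fit_to_context(history, 9)))  # 9
```

The point of the sketch is the trade-off: anything older than the window simply isn’t visible to the model, which is why growing a window from 9,000 to 100,000 tokens matters for “memory.”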

Other machine learnings

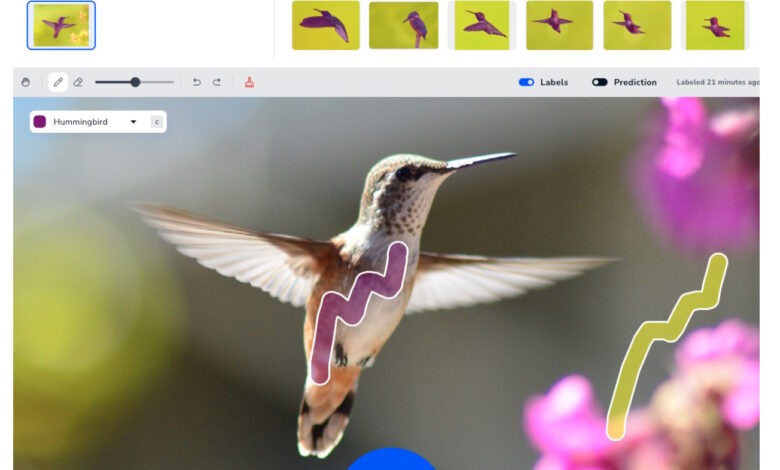

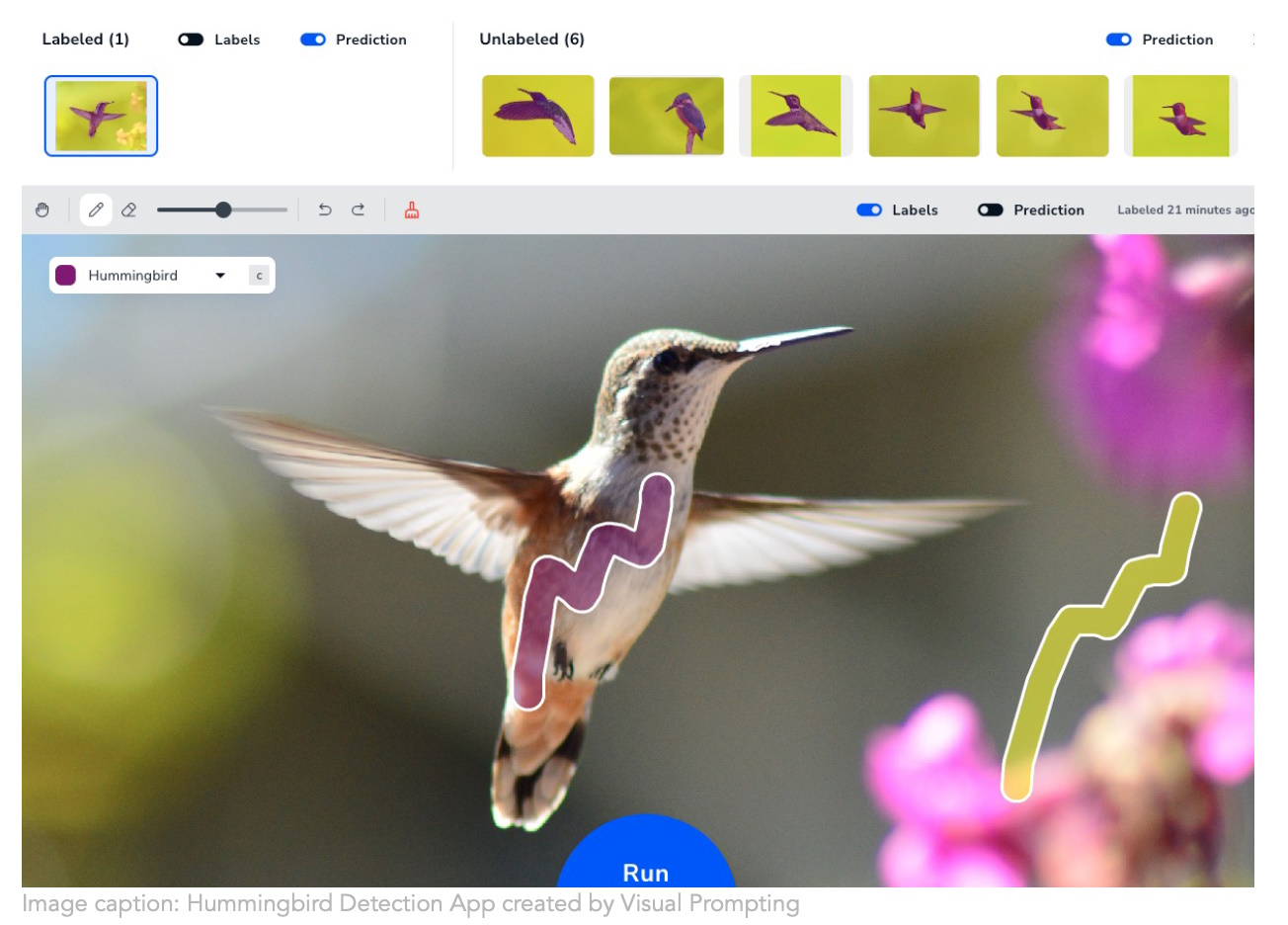

Image Credits: Landing AI

Andrew Ng’s new company Landing AI is taking a more intuitive approach to creating computer vision training. Making a model understand what you want to identify in images is pretty painstaking, but their “visual prompting” technique lets you just make a few brush strokes, and it figures out your intent from there. Anyone who has to build segmentation models is saying “my god, finally!” That probably includes a lot of grad students who currently spend hours masking organelles and household objects.

Microsoft has applied diffusion models in a unique and interesting way, essentially using them to generate an action vector instead of an image, having trained them on lots of observed human actions. It’s still very early, and diffusion isn’t the obvious solution for this, but as diffusion models are stable and versatile, it’s interesting to see how they can be applied beyond purely visual tasks. Their paper is being presented at ICLR later this year.

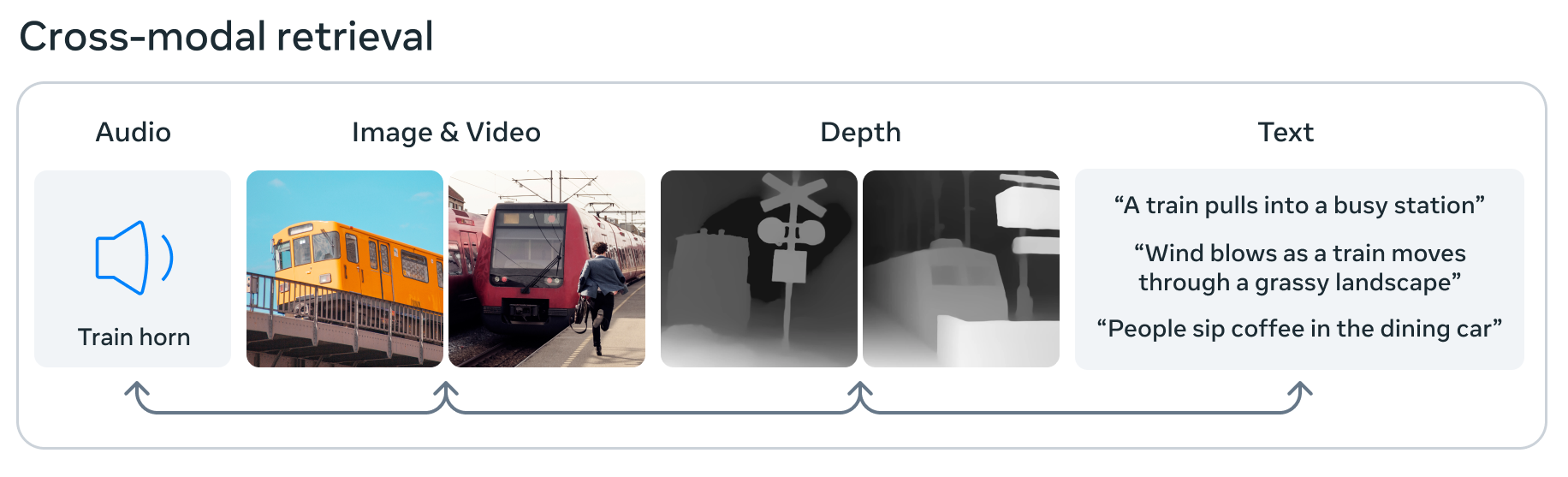

Image Credits: Meta

Meta is also pushing the edges of AI with ImageBind, which it claims is the first model that can process and integrate data from six different modalities: images and video, text, audio, 3D depth data, thermal info, and motion or positional data. This means that in its little machine learning embedding space, an image might be associated with a sound, a 3D shape and various text descriptions, any one of which could be asked about or used to make a decision. It’s a step toward “general” AI in that it absorbs and associates data more like the brain does, but it’s still basic and experimental, so don’t get too excited just yet.
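The “embedding space” idea above can be sketched in a few lines: if every modality is mapped into the same vector space, associating an image with a sound reduces to finding the nearest vector by cosine similarity. The vectors below are made up and only three-dimensional, and none of this uses ImageBind’s actual code or API; it’s a minimal illustration of cross-modal lookup under those assumptions.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: 1.0 means same direction, near 0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


# Hypothetical shared-space embeddings keyed by (modality, description).
# Real models use hundreds of dimensions learned from data.
EMBEDDINGS = {
    ("audio", "waves crashing"): [0.9, 0.1, 0.0],
    ("audio", "dog barking"): [0.0, 0.2, 0.95],
    ("text", "a beach at sunset"): [0.85, 0.3, 0.05],
    ("depth", "room with a couch"): [0.1, 0.9, 0.2],
}


def nearest(query: list[float], modality: str) -> str:
    """Return the description in `modality` whose vector is closest to `query`."""
    candidates = {k: v for k, v in EMBEDDINGS.items() if k[0] == modality}
    best = max(candidates, key=lambda k: cosine(query, candidates[k]))
    return best[1]


# A hypothetical embedding for a photo of a beach.
beach_photo = [0.88, 0.2, 0.02]
print(nearest(beach_photo, "audio"))  # waves crashing
print(nearest(beach_photo, "text"))   # a beach at sunset
```

Because every modality shares one space, the same `nearest` lookup works whichever modality the query or the candidates come from; that shared space is what makes the “associate anything with anything” framing possible.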

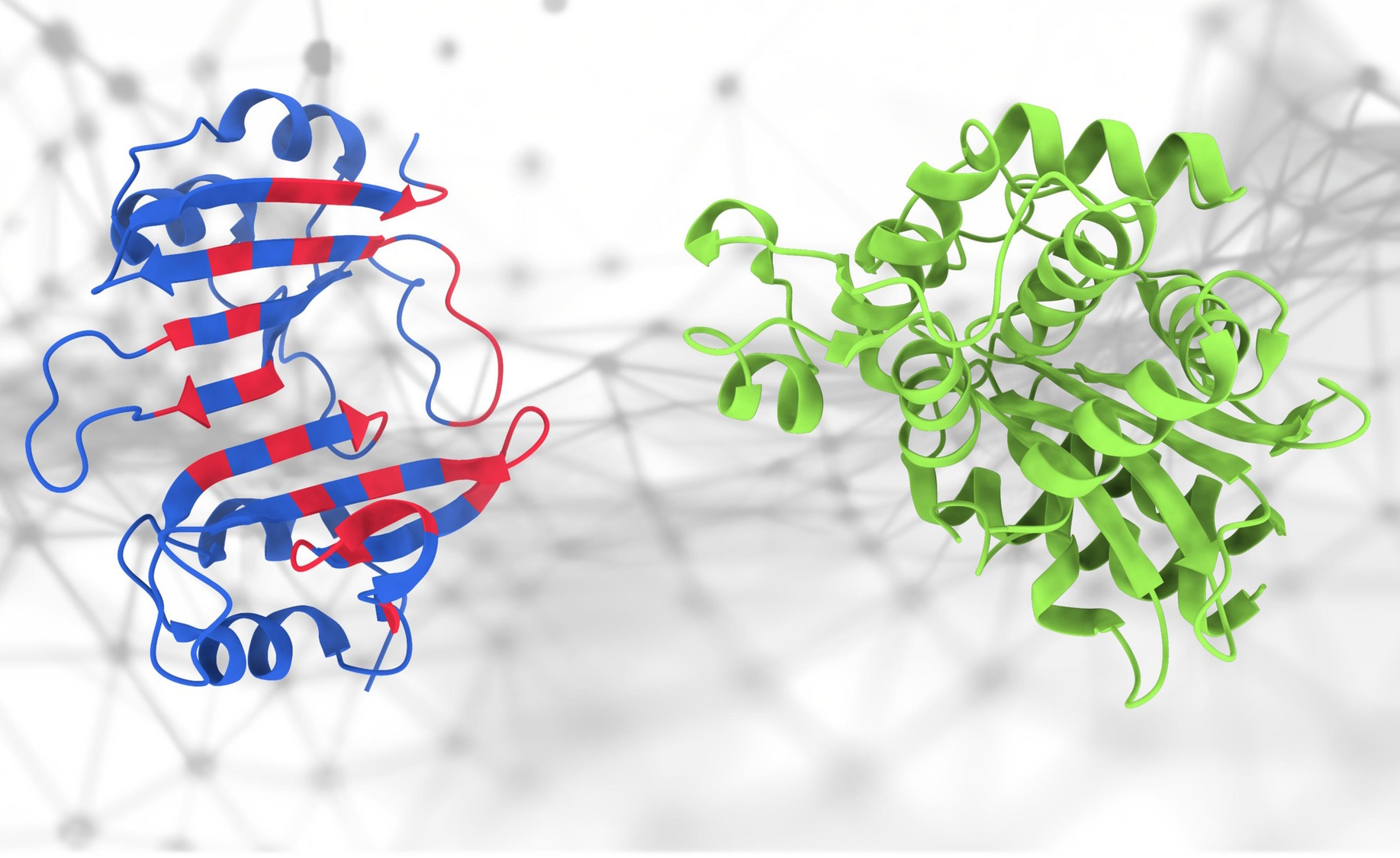

If these proteins touch… what happens?

Everyone got excited about AlphaFold, and for good reason, but really, structure is just one small part of the very complex science of proteomics. It’s how those proteins interact that is both important and difficult to predict, and this new PeSTo model from EPFL attempts to do just that. “It focuses on important atoms and interactions within the protein structure,” said lead developer Lucien Krapp. “It means that this method effectively captures the complex interactions within protein structures to enable an accurate prediction of protein binding interfaces.” Even if it isn’t exact or 100% reliable, not having to start from scratch is super useful for researchers.

The feds are going big on AI. The President even dropped in on a meeting with a bunch of top AI CEOs to say how important getting this right is. Maybe a bunch of corporations aren’t necessarily the right ones to ask, but they’ll at least have some ideas worth considering. But they already have lobbyists, right?

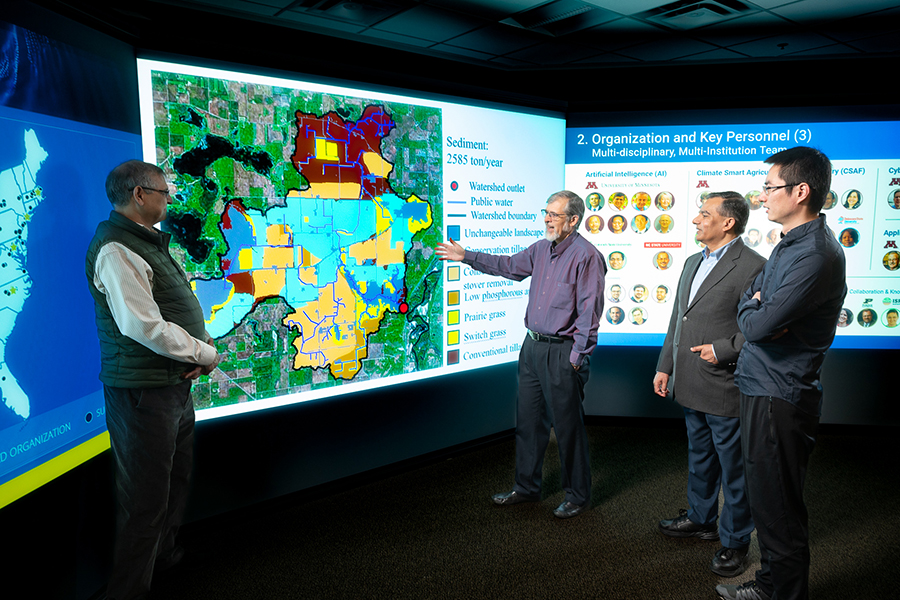

I’m more excited about the new AI research centers popping up with federal funding. Basic research is hugely needed to counterbalance the product-focused work being done by the likes of OpenAI and Google, so when you have AI centers with mandates to investigate things like social science (at CMU), or climate change and agriculture (at U of Minnesota), it feels like green fields (both figuratively and literally). Though I also want to give a little shout-out to this Meta research on forestry measurement.

Doing AI together on a big screen: it’s science!

There are lots of interesting conversations out there about AI. I thought this interview with UCLA (my alma mater, go Bruins) academics Jacob Foster and Danny Snelson was an interesting one. Here’s a great thought on LLMs to pretend you came up with this weekend when people are talking about AI:

These systems reveal just how formally consistent most writing is. The more generic the formats that these predictive models simulate, the more successful they are. These developments push us to recognize the normative functions of our forms and potentially transform them. After the introduction of photography, which is very good at capturing a representational space, the painterly milieu developed Impressionism, a style that rejected accurate representation altogether to linger with the materiality of paint itself.

Definitely using that!
